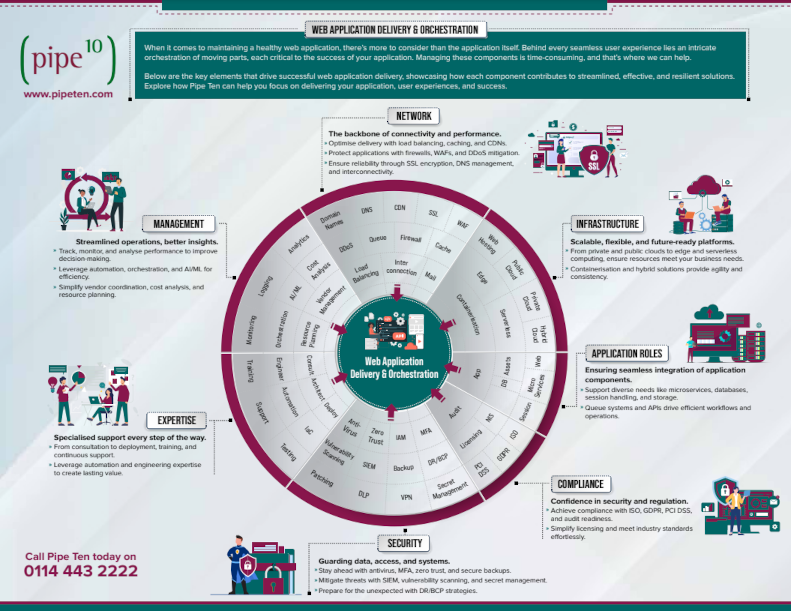

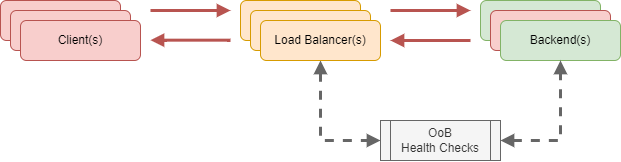

A load balancer is a device or software that distributes network or application traffic across a number of backend servers. Its primary purpose is to enhance the reliability and performance of applications, websites, databases, and other services by distributing/balancing the workload.

Problems load balancers need to solve

The availability of an application shouldn’t rely on the availability of any single backend web server.

The performance of an application shouldn’t be impacted when demand exceeds the performance/resource capacity of any single backend web server.

The application’s technology choices shouldn’t be limited by the ability to support all applications on any particular backend OS or platform.

The security of an application should be capable of evolving to emerging standards.

The front end needs to outscale the backend in all these respects.

Different types of load balancers

DNS load balancing works by publishing multiple DNS records for the same hostname, each pointing to a different server. This is an easy way of adding capacity to a solution; however, it does not provide redundancy in the case of a failure, because DNS keeps handing out a failed server’s address.
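To illustrate the redundancy gap, here is a minimal sketch that simulates round-robin DNS handing out addresses in rotation. The IP addresses, the `HEALTHY` set and the function names are all hypothetical, and real resolvers and client-side caching behave in more varied ways than this simplification suggests.

```python
from itertools import cycle

# Hypothetical A records for one hostname (documentation-range IPs).
A_RECORDS = ["192.0.2.10", "192.0.2.11", "192.0.2.12"]

# Assumption for the demo: one server has gone down.
# Plain DNS has no knowledge of this and keeps returning its address.
HEALTHY = {"192.0.2.10", "192.0.2.12"}


def resolve_round_robin(records):
    """Return an iterator that yields the records in rotation."""
    return cycle(records)


def simulate(requests):
    """Run `requests` lookups through the rotation and count failures."""
    resolver = resolve_round_robin(A_RECORDS)
    failed = 0
    for _ in range(requests):
        address = next(resolver)
        if address not in HEALTHY:
            failed += 1
    return failed


if __name__ == "__main__":
    print(simulate(9), "of 9 requests were sent to the failed server")
```

In this simulation a third of requests keep landing on the failed server, since nothing removes its record from the rotation.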

Horizontal scaling & redundancy are important for web solutions. Although DNS load balancing is convenient, its lack of redundancy means we cannot consider it for an enterprise solution. Because of this, we have focussed on other load balancer types, such as layer 7 implementations.

A layer 7 reverse proxying load balancer generally works by:

- Client makes a request to the load balancer.

- Load balancer uses an algorithm (like round robin, least connections, or IP hash) to decide which backend server to route the request to (load balancing).

- The chosen backend server processes the request and returns the response to the load balancer (reverse proxying).

- The response is delivered back to the requesting client.
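The three algorithms named in the steps above can be sketched roughly as follows. This is an illustrative sketch only: the backend names and class names are hypothetical, and it does not claim to mirror how NGINX or any other product implements these strategies internally.

```python
import hashlib
from itertools import count

BACKENDS = ["app-1", "app-2", "app-3"]  # hypothetical backend names


class RoundRobin:
    """Hand out backends in a fixed rotation."""

    def __init__(self, backends):
        self.backends = list(backends)
        self._counter = count()

    def pick(self, client_ip=None):
        return self.backends[next(self._counter) % len(self.backends)]


class LeastConnections:
    """Pick the backend with the fewest in-flight requests."""

    def __init__(self, backends):
        self.active = {backend: 0 for backend in backends}

    def pick(self, client_ip=None):
        # Ties resolve to the backend listed first.
        backend = min(self.active, key=self.active.get)
        self.active[backend] += 1
        return backend

    def release(self, backend):
        """Call when a request finishes so the count stays accurate."""
        self.active[backend] -= 1


class IPHash:
    """Map a given client IP to the same backend on every request."""

    def __init__(self, backends):
        self.backends = list(backends)

    def pick(self, client_ip):
        digest = hashlib.sha256(client_ip.encode()).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.backends)
        return self.backends[index]


if __name__ == "__main__":
    rr = RoundRobin(BACKENDS)
    print([rr.pick() for _ in range(4)])
    print(IPHash(BACKENDS).pick("203.0.113.7"))
```

Round robin suits backends of equal capacity, least connections adapts to uneven request durations, and IP hash keeps a client on one backend, which can help when session state lives on the server.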

There are plenty of options to consider for reverse proxying load balancing, from software packages such as Caddy, Zevenet, NGINX, Pound, HAProxy or Traefik to SaaS / Public Cloud offerings such as Cloudflare, Elastic Load Balancing (AWS), TPLB (GCE) and Application Gateways (Azure). All have their own unique benefits and problems.

Why do we prefer NGINX?

We decided NGINX best fit the majority of our needs and, while we’re open to changing this in the future, it meets all of our short-to-medium-term needs, namely:

Platform Independent

Choosing software such as NGINX, which is not tied to any specific platform or OS (as opposed to a vendor-tied service like ELB), allows us to be completely flexible and ensures all customers can benefit from common software irrespective of provider. This independence lends itself to automation platforms like Ansible, where we can automate deployment of software and configuration without the complexity of other solutions.

Mature

Released way back in 2004 not long after Pipe Ten was founded, NGINX has evolved into the mature, tried-and-tested solution we know and love today.

Transparent & Extensible

The beauty of NGINX being open source software is that we benefit from a huge user base. We benefit from others’ contributions, can make our own contributions and can look at the underlying code when something goes wrong. There’s also some solace in knowing the source code has been reviewed for exploits many times and so is theoretically less likely to be hit by zero-day exploits.

Coherent & Granular

We like how literal NGINX configurations are, making them easy to read, understand, extend and troubleshoot. Configurations are a pleasure to work with, whether that’s manually or through automation platforms like Ansible. The ability to granularly control the routing of traffic to different backends based on the client or the request is a huge benefit, permitting customers to develop mixed-technology applications under the same hostname. For example, customer.ext/page.php can be routed to an NGINX web server running PHP-FPM, customer.ext/page.aspx can be routed to a different web server running .NET Core, customer.ext/application/ can be routed to a specific Docker application container and customer.ext/language/ can be routed to a specific localised application or website.
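The routing idea in that example can be sketched as a small rule table that maps a request path to a backend. This is a conceptual sketch in Python rather than an NGINX configuration: the upstream names and the `choose_upstream` helper are hypothetical, and NGINX’s own location matching follows its own precedence rules, which this does not try to reproduce.

```python
# Hypothetical upstream names; the paths mirror the examples in the text.
ROUTES = [
    # (match kind, pattern, upstream)
    ("suffix", ".php", "php_fpm_backend"),
    ("suffix", ".aspx", "dotnet_backend"),
    ("prefix", "/application/", "docker_app_backend"),
    ("prefix", "/language/", "localised_site_backend"),
]
DEFAULT_UPSTREAM = "default_backend"


def choose_upstream(path):
    """Return the upstream for a request path; the first matching rule wins."""
    path = path.split("?", 1)[0]  # ignore any query string
    for kind, pattern, upstream in ROUTES:
        if kind == "suffix" and path.endswith(pattern):
            return upstream
        if kind == "prefix" and path.startswith(pattern):
            return upstream
    return DEFAULT_UPSTREAM


if __name__ == "__main__":
    for example in ["/page.php", "/page.aspx", "/application/", "/language/"]:
        print(example, "->", choose_upstream(example))
```

Because each rule only inspects the request path, every backend can run a different technology stack while clients still see a single hostname.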

Modern

NGINX has reliably adopted new web technologies in a timely and stable manner. NGINX currently supports TLS 1.3, WebSockets, HTTP/2, and more. HTTP/3+QUIC support is right around the corner and already has alpha versions available for testing.

Versatile

NGINX is feature-rich by default. For example, the reverse proxying functionality is not limited to HTTP(S); it can proxy generic UDP/TCP traffic too, allowing NGINX to be used in a massive range of scenarios.

Performant

We aren’t going too deep into NGINX performance today (keep an eye out for future insights where we may delve deeper into this comparison), but to summarise: we’re extremely confident in NGINX’s ability to perform when we need it to.

Conclusion

To conclude, NGINX stood out as a solid choice for a load balancer / reverse proxy. It checks all of our boxes and allows us to scale and offer a wide range of functionality, all under a single service. Many of the other services we reviewed internally allowed us to provide similar functionality, but each comes with its own pros and cons. We found many interesting features in other services and may include these findings in future posts, so stay tuned!

Author: James Clarkson

James’ meticulous approach to application and infrastructure development has been fundamental to the successful launch and delivery of all customers’ solutions. James originally joined Pipe Ten whilst studying at University and quickly rose through the ranks to lead the development and integration of new technologies that Pipe Ten’s customers rely on every day as the Head of Platform Engineering. A stalwart advocate for digital accessibility, performance and security, his industry knowledge and awareness in the technology sector are second to none.